# DolphinScheduler Standalone Installation Guide

DolphinScheduler Standalone package contents

apache-dolphinscheduler-7.1.0-standalone-bin.tar.gz

├── bin # environment variables, database connection settings, etc.

├── license # license files

└── standalone-server # standalone server, responsible for scheduled job triggering and task dispatch

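Prerequisites and notes:

1. Make sure JDK 1.8.0_251 or later is installed and configured on the machine.

2. A deployment user has been created, with passwordless access and permissions configured; see the DWS installation prerequisites (DWS安装必读).

3. Install the process-tree analysis command. The installation method depends on the operating system.

The pstree command is provided by the psmisc package, for example: yum install psmisc

Jobs are run for multiple tenants by switching Linux users with sudo -u {linux-user}, so the deployment user needs sudo privileges.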
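The prerequisites above can be checked with a short script before installing. This is only a minimal sketch: the helper name check_commands is hypothetical, and it verifies that the commands are on PATH without checking the JDK version or the sudo rules themselves.

```shell
#!/bin/sh
# Hypothetical helper: report which of the given commands are missing from PATH.
# Prints one line per missing command and returns 1 if any is missing.
check_commands() {
    missing=0
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd"
            missing=1
        fi
    done
    return "$missing"
}

# Example call: java for the JDK, pstree from psmisc, sudo for tenant switching.
# check_commands java pstree sudo && echo "all prerequisite commands found"
```

The JDK version can then be inspected by hand with java -version.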

# 1. Extract the package

tar -zxvf apache-dolphinscheduler-7.1.0-standalone-bin.tar.gz

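# 2. Set directory ownership

Give the deployment user ownership of the apache-dolphinscheduler-*-bin directory extracted from the binary package, so that the user can operate on it: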

chown -R dws:dws apache-dolphinscheduler-7.1.0-standalone-bin

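# 3. Edit the configuration files

# 3.1 Edit bin/env/dolphinscheduler_env.sh

dolphinscheduler_env.sh covers the following settings:

- The DolphinScheduler database configuration

- External dependency paths or libraries needed by some task types; JAVA_HOME and SPARK_HOME, for example, are defined here

- The registry center (jdbc)

- Server-side settings such as cache and time zone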

# Set JAVA_HOME and DATABASE

export JAVA_HOME=${JAVA_HOME:-/opt/jdk1.8.0_251}

# Set the value to the database in use: mysql, postgresql, Oracle, gaussDB, or DM

export DATABASE=${DATABASE:-mysql}

export SPRING_PROFILES_ACTIVE=${DATABASE}

# Database connection settings

export SPRING_DATASOURCE_URL="jdbc:mysql://192.168.16.142:23306/dolphin_80?serverTimezone=Asia/Shanghai&useSSL=false"

export SPRING_DATASOURCE_USERNAME="root"

export SPRING_DATASOURCE_PASSWORD="primeton"

# By default DolphinScheduler registers through jdbc, and the registry tables are also initialized in the database that ${SPRING_DATASOURCE_URL} points to

# Registry center configuration, determines the type and link of the registry center

export REGISTRY_TYPE=${REGISTRY_TYPE:-jdbc}

export REGISTRY_HIKARI_CONFIG_JDBC_URL=${SPRING_DATASOURCE_URL}

export REGISTRY_HIKARI_CONFIG_USERNAME=${SPRING_DATASOURCE_USERNAME}

export REGISTRY_HIKARI_CONFIG_PASSWORD=${SPRING_DATASOURCE_PASSWORD}

#export REGISTRY_TYPE=${REGISTRY_TYPE:-zookeeper}

#export REGISTRY_ZOOKEEPER_CONNECT_STRING=${REGISTRY_ZOOKEEPER_CONNECT_STRING:-192.168.16.79:2181}

# Set the FLINK_HOME path

export FLINK_HOME=${FLINK_HOME:-/home/flink/flink-1.15.4}

# Set the SEATUNNEL_HOME path

export SEATUNNEL_HOME=${SEATUNNEL_HOME:-/home/seatunnel/apache-seatunnel-7.1.0}

# Add the Primeton DI deployment path

export PDI_HOME=${PDI_HOME:-/home/DI/Primeton_DI_7.1.0/diclient}

export PATH=$HADOOP_HOME/bin:$SPARK_HOME1/bin:$SPARK_HOME2/bin:$PYTHON_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$FLINK_HOME/bin:$DATAX_HOME/bin:$PDI_HOME:$PATH

When apache-dolphinscheduler-7.1.0-standalone-bin.tar.gz is integrated with DI and SeaTunnel, PDI_HOME and SEATUNNEL_HOME must be set in the file above.
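The export lines in dolphinscheduler_env.sh use the shell's ${VAR:-default} expansion, so a value already present in the environment takes precedence over the path written in the file. The following sketch illustrates that behaviour with a hypothetical variable DEMO_HOME and a hypothetical helper resolve_home; neither is part of DolphinScheduler.

```shell
#!/bin/sh
# DEMO_HOME and resolve_home are hypothetical names used only for illustration.
resolve_home() {
    # Falls back to /opt/demo when DEMO_HOME is unset or empty.
    echo "${DEMO_HOME:-/opt/demo}"
}

unset DEMO_HOME
resolve_home            # prints /opt/demo

DEMO_HOME=/custom/demo
resolve_home            # prints /custom/demo
```

In practice this means that editing the default in the file has no effect if the deployment user's profile already exports the same variable with a different value.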

# 3.2 Edit standalone-server/conf/application.yaml

Change the value of the org.quartz.scheduler.instanceName parameter:

spring:
  quartz:
    properties:
      org.quartz.scheduler.instanceName: DolphinScheduler

- When registering the scheduling engine, the engine code must match the value configured for instanceName.
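Because the engine code used at registration has to match instanceName, it can help to read the configured value back from the file. The sketch below assumes the key sits on a single line of application.yaml; the helper name quartz_instance_name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the Quartz instance name configured in a YAML file.
# Assumes the key and its value are written on one line.
quartz_instance_name() {
    sed -n 's/^[[:space:]]*org\.quartz\.scheduler\.instanceName:[[:space:]]*//p' "$1" | head -n 1
}

# Example call from the installation directory:
# quartz_instance_name standalone-server/conf/application.yaml
```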

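# 5. Connect distributed or remote object storage (optional)

This configuration is required if users need to manage files in a big-data environment (in DWS: Data Development -> Project Configuration -> File Management).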

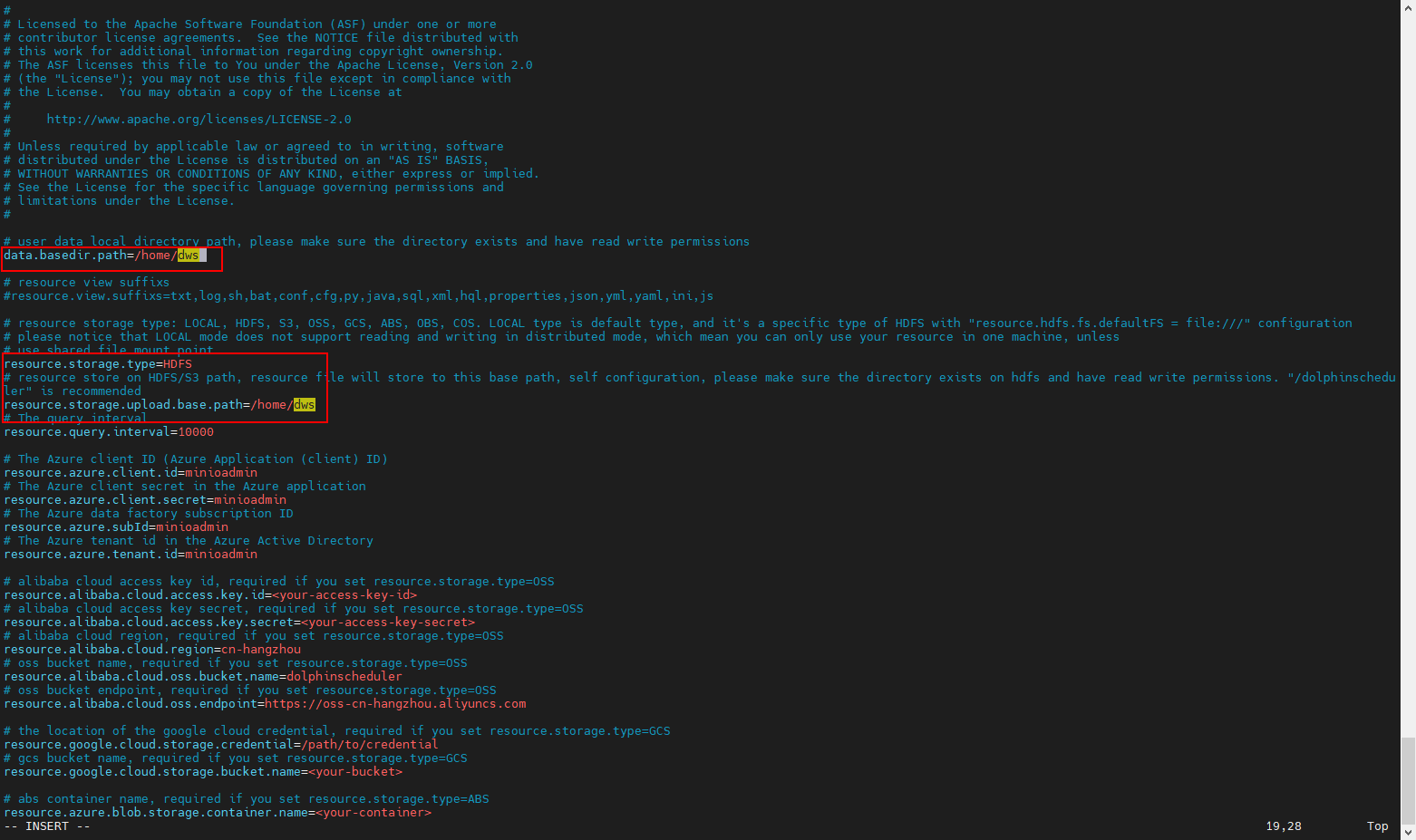

5.1. Configure the remote object storage environment, such as HDFS, in the standalone-server configuration file

vi standalone-server/conf/common.properties

# user data local directory path, please make sure the directory exists and have read write permissions

data.basedir.path=/home/dws

# resource view suffixs

#resource.view.suffixs=txt,log,sh,bat,conf,cfg,py,java,sql,xml,hql,properties,json,yml,yaml,ini,js

# resource storage type: LOCAL, HDFS, S3, OSS, GCS, ABS, OBS, COS. LOCAL type is default type, and it's a specific type of HDFS with "resource.hdfs.fs.defaultFS = file:///" configuration

# please notice that LOCAL mode does not support reading and writing in distributed mode, which mean you can only use your resource in one machine, unless

# use shared file mount point

resource.storage.type=HDFS

# resource store on HDFS/S3 path, resource file will store to this base path, self configuration, please make sure the directory exists on hdfs and have read write permissions. "/dolphinscheduler" is recommended

resource.storage.upload.base.path=/home/dws

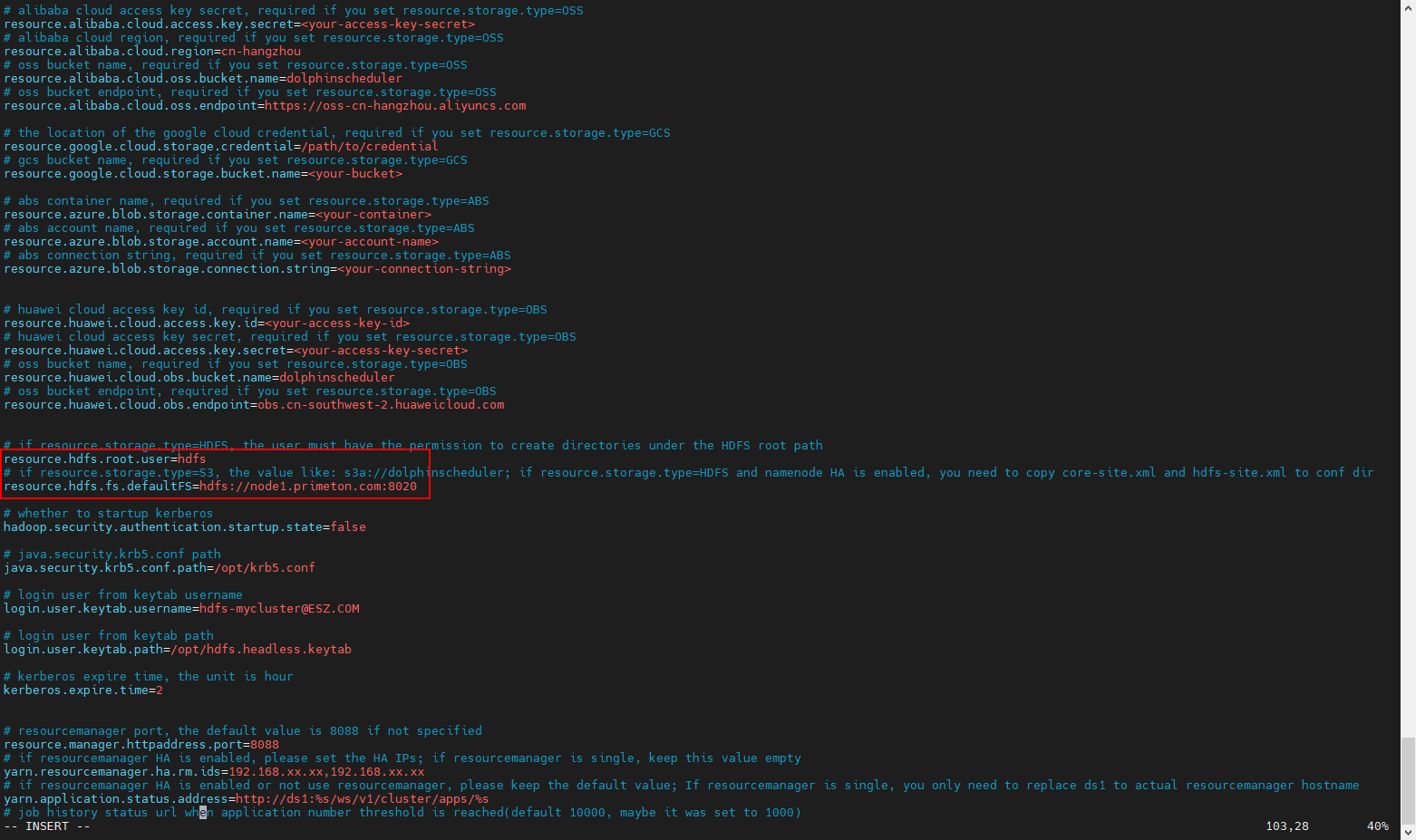

# if resource.storage.type=HDFS, the user must have the permission to create directories under the HDFS root path

resource.hdfs.root.user=hdfs

# if resource.storage.type=S3, the value like: s3a://dolphinscheduler; if resource.storage.type=HDFS and namenode HA is enabled, you need to copy core-site.xml and hdfs-site.xml to conf dir

resource.hdfs.fs.defaultFS=hdfs://mycluster:8020

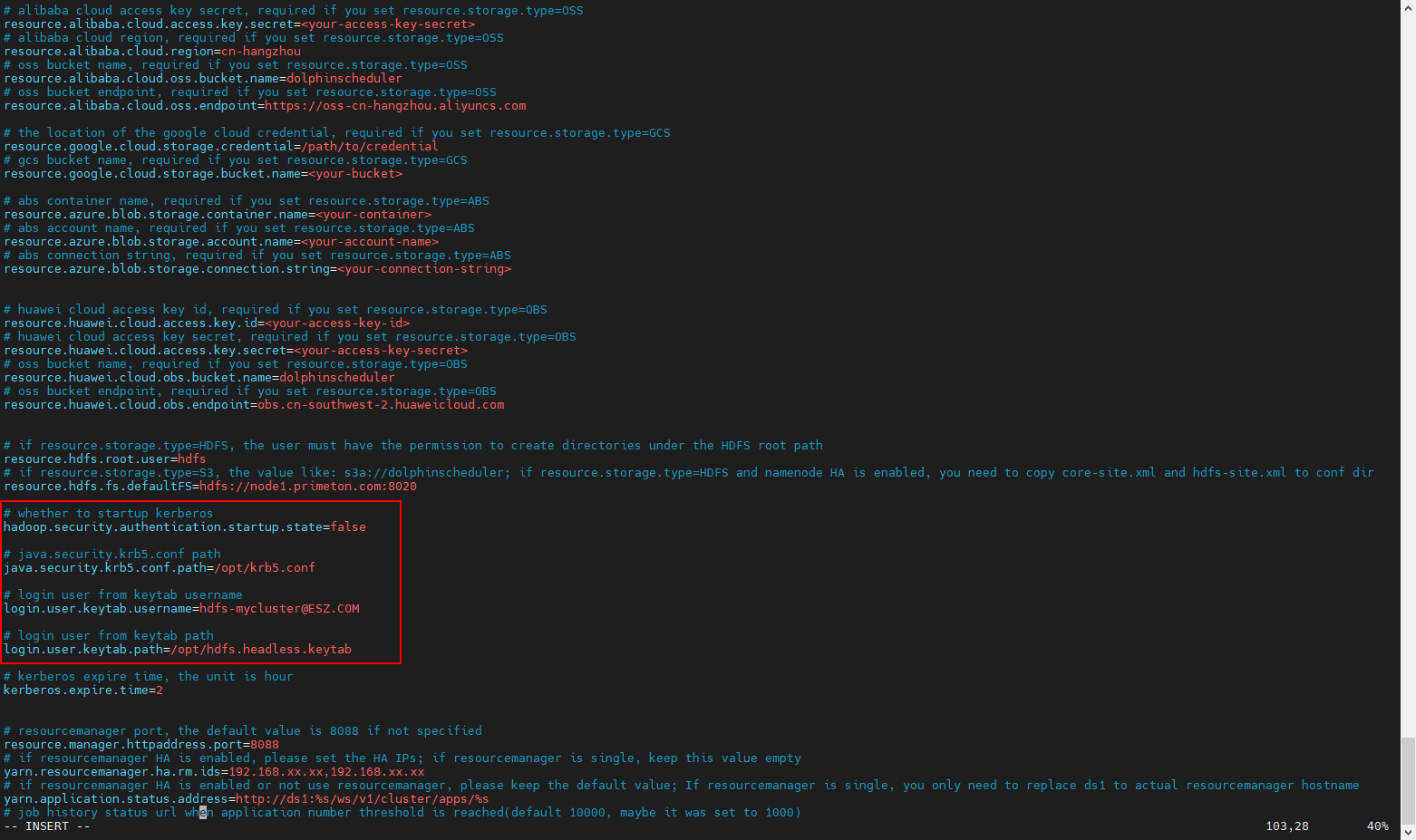

If Kerberos authentication is required, also configure the following:

# whether to startup kerberos

hadoop.security.authentication.startup.state=true

# java.security.krb5.conf path

java.security.krb5.conf.path=/opt/krb5.conf

# login user from keytab username

login.user.keytab.username=hdfs-mycluster@ESZ.COM

# login user from keytab path

login.user.keytab.path=/opt/hdfs.headless.keytab
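A wrong krb5.conf or keytab path is easy to miss until the first HDFS call fails, so the two paths can be checked right after editing. This is a minimal sketch with hypothetical helper names (get_prop, check_kerberos_files); it only tests that the files exist and are readable and does not attempt a Kerberos login.

```shell
#!/bin/sh
# Hypothetical helper: print the value of one key from a .properties file.
# Lines starting with '#' are comments and never match the anchored pattern.
get_prop() {
    pattern=$(printf '%s' "$2" | sed 's/\./\\./g')   # escape dots for the regex
    sed -n "s/^[[:space:]]*${pattern}[[:space:]]*=[[:space:]]*//p" "$1" | tail -n 1
}

# Hypothetical helper: check that the krb5.conf and keytab named in the
# properties file are readable by the current user. Returns 1 otherwise.
check_kerberos_files() {
    status=0
    for prop_key in java.security.krb5.conf.path login.user.keytab.path; do
        path=$(get_prop "$1" "$prop_key")
        if [ -z "$path" ] || [ ! -r "$path" ]; then
            echo "$prop_key: not readable: $path"
            status=1
        fi
    done
    return "$status"
}

# Example call from the installation directory, run as the deployment user:
# check_kerberos_files standalone-server/conf/common.properties
```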

5.2. Copy the core-site.xml and hdfs-site.xml configuration files of the target big-data environment into the standalone-server/conf directory.
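The copy in step 5.2 can be sketched as below. The helper name copy_hadoop_conf is hypothetical, and /etc/hadoop/conf in the example call is an assumed location of the Hadoop client configuration; use the directory that applies to your cluster.

```shell
#!/bin/sh
# Hypothetical helper: copy the two Hadoop client files from a source directory
# into the standalone-server conf directory, failing if either file is absent.
copy_hadoop_conf() {
    src_dir=$1
    dest_dir=$2
    for f in core-site.xml hdfs-site.xml; do
        if [ ! -f "$src_dir/$f" ]; then
            echo "not found: $src_dir/$f" >&2
            return 1
        fi
    done
    cp "$src_dir/core-site.xml" "$src_dir/hdfs-site.xml" "$dest_dir/"
}

# Example call; /etc/hadoop/conf is an assumption, adjust to your environment.
# copy_hadoop_conf /etc/hadoop/conf standalone-server/conf
```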

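# 6. Initialize the database

Run the matching database script from the standalone-server/conf/sql/ directory to initialize the database. The following database types are currently supported:

DM (Dameng) script: dolphinscheduler_dm.sql

MySQL script: dolphinscheduler_mysql.sql

openGauss script: dolphinscheduler_openguass.sql

Oracle script: dolphinscheduler_oracle.sql

PostgreSQL script: dolphinscheduler_postgresql.sql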
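As a rough sketch of this step, the helper below maps a database type to the script name listed above. The helper name init_script_for and the lowercase type labels are hypothetical and chosen only for illustration; they are not necessarily the values expected by the DATABASE variable.

```shell
#!/bin/sh
# Hypothetical helper: print the init script file name for a database type.
# The lowercase labels are illustrative, not values defined by DolphinScheduler.
init_script_for() {
    case "$1" in
        dm)         echo "dolphinscheduler_dm.sql" ;;
        mysql)      echo "dolphinscheduler_mysql.sql" ;;
        opengauss)  echo "dolphinscheduler_openguass.sql" ;;
        oracle)     echo "dolphinscheduler_oracle.sql" ;;
        postgresql) echo "dolphinscheduler_postgresql.sql" ;;
        *)          echo "unsupported database type: $1" >&2; return 1 ;;
    esac
}

# Example for MySQL, using the host, port, user and schema from the JDBC URL in
# dolphinscheduler_env.sh (the schema is assumed to exist already):
# mysql -h 192.168.16.142 -P 23306 -u root -p dolphin_80 \
#   < "standalone-server/conf/sql/$(init_script_for mysql)"
```

For the other database types, feed the script to that database's own command-line client in the same way.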

# 7. Start and stop the service

su - dws

cd apache-dolphinscheduler-7.1.0-standalone-bin

# Start the Standalone Server service

./bin/dolphinscheduler-daemon.sh start standalone-server

# Stop the Standalone Server service

./bin/dolphinscheduler-daemon.sh stop standalone-server

# Check the Standalone Server status

./bin/dolphinscheduler-daemon.sh status standalone-server
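The server can take some time to become reachable after the start command returns. One way to wait for it in a script is a small retry loop, sketched below. The helper name wait_until is hypothetical, and the URL in the example is an assumption based on the upstream default API port 12345, which may differ in this build.

```shell
#!/bin/sh
# Hypothetical helper: retry a command until it succeeds or attempts run out.
# $1 = number of attempts, $2 = delay in seconds, remaining args = the command.
wait_until() {
    attempts=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Example: poll for up to about 60 seconds. The port and path are assumptions.
# wait_until 30 2 curl -sf http://localhost:12345/dolphinscheduler/ui/
```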